“Come on guys, let’s be serious. If you really want to do something, don’t just ‘like’ this post. Write that you are ready, and we can try to start something.” Mustafa Nayem, a Ukrainian journalist, typed those words into his Facebook account on the morning of November 21st 2013. Within an hour his post had garnered 600 comments. That prompted Mr Nayem to write again, calling on his followers to gather later that day on the Maidan square in Kiev. Three months later Ukraine’s president, Viktor Yanukovych, was removed.

At the time, this did not seem all that remarkable. From the protests around the Iranian elections of 2009 onwards, the role of Facebook and Twitter in political uprisings in dodgy countries had been prominent and celebrated. The social-media-fuelled movements often failed, in the end, to achieve much; five months before Mr Nayem put up his post the army had reestablished its power in Egypt, where social media had been crucial to the downfall of General Hosni Mubarak in 2011. But the idea had taken hold that, by connecting people and giving them a voice, social media had become a global force for plurality, democracy and progress.

A few months after the “Euromaidan” protests brought down Mr Yanukovych, a less widely noticed story provided a more disturbing insight into the potential political uses of social media. In August 2014 Eron Gjoni, a computer scientist in America, published a long, rambling blog post about his relationship with Zoe Quinn, a computer-game developer, appearing to imply she had slept with a journalist to get favourable coverage of her new game, “Depression Quest”. The post was the epicentre of “Gamergate”, a misogynistic campaign in which mostly white men keen to bolster each other’s egos let rip against feminists and all the other “social justice warriors” they despised in the world of gaming and beyond. According to some estimates, more than 2m messages with the hashtag #gamergate were sent in September and October 2014.

The campaign used the entire spectrum of social-media tools. Videos, articles and documents leaked to embarrass enemies—a practice known as doxing—were posted to YouTube and blogs. Twitter and Facebook circulated memes. Most people not directly involved were able to ignore it; crucially, the mainstream media, when they noticed it, misinterpreted it. They took Gamergate to be a serious debate, in which both sides deserved to be heard, rather than a right-wing bullying campaign.

Looking at the role that social media have played in politics in the past couple of years, it is the fake-news squalor of Gamergate, not the activist idealism of the Euromaidan, which seems to have set the tone. In Germany the far-right Alternative for Germany party won 12.6% of the vote in part because of fears and falsehoods spread on social media, such as the idea that Syrian refugees get better benefits than native Germans. In Kenya weaponised online rumours and fake news have further eroded trust in the country’s political system.

This is freaking some people out. In 2010 Wael Ghonim, an entrepreneur and fellow at Harvard University, was one of the administrators of a Facebook page called “We are all Khaled Saeed”, which helped spark the Egyptian uprising centred on Tahrir Square. “We wanted democracy,” he says today, “but got mobocracy.” Fake news spread on social media is one of the “biggest political problems facing leaders around the world”, says Jim Messina, a political strategist who has advised several presidents and prime ministers.

Governments simply do not know how to deal with this—except, that is, for those that embrace it. In the Philippines President Rodrigo Duterte relies on a “keyboard army” to disseminate false narratives. His counterpart in South Africa, Jacob Zuma, also benefits from the protection of trolls. And then there is Russia, which has both a long history of disinformation campaigns and a domestic political culture largely untroubled by concerns of truth. It has taken to the dark side of social media like a rat to a drainpipe, not just for internal use, but for export, too.

Vladimir Putin’s regime has used social media as part of surreptitious campaigns in its neighbours, including Ukraine, in France and Germany, in America and elsewhere. At outfits like the Internet Research Agency professional trolls work 12-hour shifts. Russian hackers set up bots by the thousand to keep Twitter well fed with on-message tweets (they have recently started to tweet assiduously in support of Catalan independence). Sputnik and RT, the government-controlled news agency and broadcaster, respectively, provide stories for the apparatus to spread. During the French election this year an article by Sputnik prominently featured the results of an unrepresentative social-media study by Brand Analytics, a research firm based in Moscow, putting conservative candidate François Fillon at the head of the field.

These stories and incendiary posts bounce between social networks, including Facebook, its subsidiary Instagram, and Twitter. They often perform better than content from real people and media companies. Bots generated one out of every five political messages posted on Twitter in America’s presidential campaign last year. The RAND Corporation, a think-tank, calls this integrated, purposeful system a “firehose of falsehood”.

On November 1st representatives of Facebook, Google and Twitter fielded hostile questions on Capitol Hill about the role they played in helping that firehose drench American voters. The hearings were triggered by reports that during the 2016 campaign Russian-controlled entities bought ads and posted content about divisive political issues that spread virally, in an attempt to sow discord. Facebook has estimated that Russian content on its network, including posts and paid ads, reached 126m Americans, around 40% of the nation’s population.

Given the concentration of power in the market—Facebook and Google account for about 40% of America’s digital content consumption, according to Brian Wieser of Pivotal Research, a data provider—such questions are well worth worrying about. But the concerns about social media run deeper than the actions of specific firms or particular governments.

Social media are a mechanism for capturing, manipulating and consuming attention unlike any other. That in itself means that power over those media—be it the power of ownership, of regulation or of clever hacking—is of immense political importance. Regardless of specific agendas, though, it seems to many that the more information people consume through these media, the harder it will become to create a shared, open space for political discussion—or even to imagine that such a place might exist.

Years ago Jürgen Habermas, a noted German philosopher, suggested that while the connectivity of social media might destabilise authoritarian countries, it would also erode the public sphere in democracies. James Williams, a doctoral student at Oxford University and a former Google employee, now claims that “digital technologies increasingly inhibit our ability to pursue any politics worth having.” To save democracy, he argues, “we need to reform our attention economy.”

The idea of the attention economy is not new. “What information consumes is rather obvious: it consumes the attention of its recipients,” Herbert Simon, a noted economist, wrote in 1971. A “wealth of information,” he added, “creates a poverty of attention.” In “The Attention Merchants”, published in 2016, Tim Wu of Columbia University explains how 20th-century media companies hoovered up ever more of this scarce resource for sale to advertisers, and how Google and its ilk have continued the process.

What are you paying with?

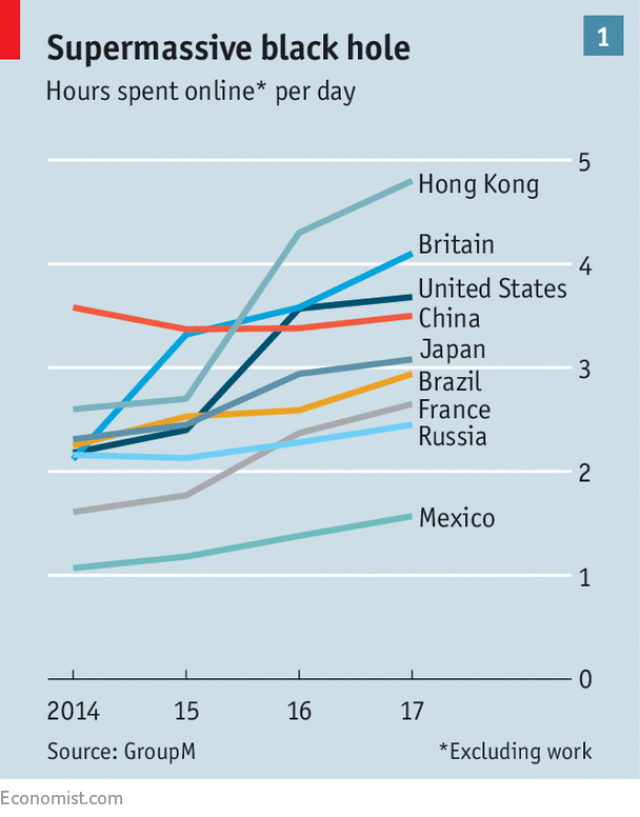

Social media have revolutionised this attention economy in two ways. The first is quantitative. New services and devices have penetrated every nook and cranny of life, sucking up more and more time (see chart 1). The second is qualitative. The new opportunity to share things with the world has made people much more active solicitors of attention, and this has fundamentally shifted the economy’s dynamics.

Interface designers, app-makers and social-media firms employ armies of designers to keep people coming back, according to Tristan Harris, another ex-Googler and co-founder of an advocacy group called “Time Well Spent”. Notifications signalling new followers or new e-mails beg to be tapped on. The now ubiquitous “pull-to-refresh” feature, which lets users check for new content, has turned smartphones into slot machines.

Adult Americans who use Facebook, Instagram and WhatsApp spend around 20 hours a month on the three services. Overall, Americans touch their smartphones on average more than 2,600 times a day (the heaviest users easily double that). The population of America farts about 3m times a minute. It likes things on Facebook about 4m times a minute.

The average piece of content is looked at for only a few seconds. But it is the overall paying of attention, not the specific information, that matters. The more people use their addictive-by-design social media, the more attention social-media companies can sell to advertisers—and the more data about the users’ behaviour they can collect for themselves. It is an increasingly lucrative business to be in. On November 1st Facebook posted record quarterly profits, up nearly 80% on the same quarter last year. Combined, Facebook and Alphabet, Google’s parent company, control half the world’s digital advertising.

In general, the nature or meaning of the information being delivered does not matter all that much, as long as some attention is being paid. But there is one quality on which the system depends: that information gets shared.

People do not share content solely because it is informative. They share information because they want attention for themselves, and for what the things they share say about them. They want to be heard and seen, and respected. They want posts to be liked, tweets to be retweeted. Some types of information spread more easily this way than others; they pass through social-media networks like viruses—a normally pathological trait which the social-media business is set up to reward.

Because of the data they collect, social-media companies have a good idea of what sort of things go viral, and how to tweak a message until it does. They are willing to share such insights with clients—including with political campaigns versed in the necessary skills, or willing to buy them. The Leave campaign in Britain’s 2016 Brexit referendum was among the pioneers. It served about 1bn targeted digital advertisements, mostly on Facebook, experimenting with different versions and dropping ineffective ones. The Trump campaign in 2016 did much the same, but on a much larger scale: on an average day it fed Facebook between 50,000 and 60,000 different versions of its advertisements, according to Brad Parscale, its digital director. Some were aimed at just a few dozen voters in a particular district.

Perhaps the most subversive techniques, though, are those developed in somewhat obsessive and technically astute coteries of amateurs whose main motivation is fun and recognition, sometimes—but not necessarily—spiked with malice. The internet has always benefited from the attention of such people. In an article entitled “Hacking the Attention Economy” danah boyd (she spells her name in lower case letters), the president of Data & Society, a think-tank, looks at their impact on social media.

In the mid-2000s members of 4chan, a messaging board first used to share manga, anime and pornography, started to explore new ways to manipulate nascent social media. They took particular pleasure in generating “memes”: funny images—often of cats, combined with a clever caption (a genre known as LOLcats)—which could spread like wildfire. They gamed online polls such as the one organised by Time to find the world’s most important people. Ms boyd describes the ways in which such hacks turned political. On and around 4chan, groups which had mostly been excluded from the mainstream media, from white nationalists to men’s rights activists, developed the dark arts they would use to further their agendas. Gamergate was their coming-out party.

The pressure to go vegan

To work at the level of the population as a whole, such social-media operations cannot stand alone. They need mechanisms which can amplify messages developed online, provide the illusion of objectivity, and validate people’s beliefs. Analysis of sharing on Twitter and Facebook, and direct links between stories, shows that a specific subset of America’s media now performs this role for the country’s right wing. Centred on Breitbart, a publication now again run by Steve Bannon, a former adviser to President Donald Trump, and Fox News, a cable channel, this “ecosystem” includes hundreds of other online-only news sites, from Conservapedia, a right-wing Wikipedia, to Infowars, which peddles conspiracy theories. There is a left-wing media ecosystem, too, but it is much less diversified and dominated by mainstream publications, such as the New York Times and CNN.

In France the right-wing ecosystem is called the fachosphere and includes such sites as Fdesouche and Égalité et Réconciliation. Nearly half the links shared during the presidential campaign led to “alternative” sources, according to Bakamo.Social, a consultancy. Although smaller, Germany’s right-wing Paralleluniversum, populated by the likes of Epoch Times and Kopp Online, is gaining ground, says Stiftung Neue Verantwortung, a think-tank.

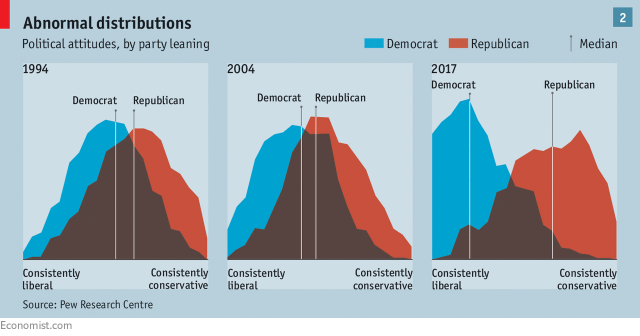

Such ecosystems are a symptom of political polarisation. They also drive it further. The algorithms that Facebook, YouTube and others use to maximise “engagement” ensure users are more likely to see information that they are liable to interact with. This tends to lead them into clusters of like-minded people sharing like-minded things, and can turn moderate views into more extreme ones. “It’s like you start as a vegetarian and end up as a vegan,” says Zeynep Tufekci of the University of North Carolina at Chapel Hill, describing her experience following the recommendations on YouTube. These effects are part of what is increasing America’s political polarisation, she argues (see chart 2).

When putting these media ecosystems to political purposes, various tools are useful. Humour is one. It spreads well; it also differentiates the in-group from the out-group; how you feel about the humour, especially if it is in questionable taste, binds you to one or the other. The best tool, though, is outrage. This is because it feeds on itself; the outrage of others with whom one feels fellowship encourages one’s own. This shared outrage reinforces the fellow feeling; a lack of appropriate outrage marks you out as not belonging. The reverse is also true. Going into the enemy camp and posting or tweeting things that cause them outrage—trolling, in other words—is a great way of getting attention.

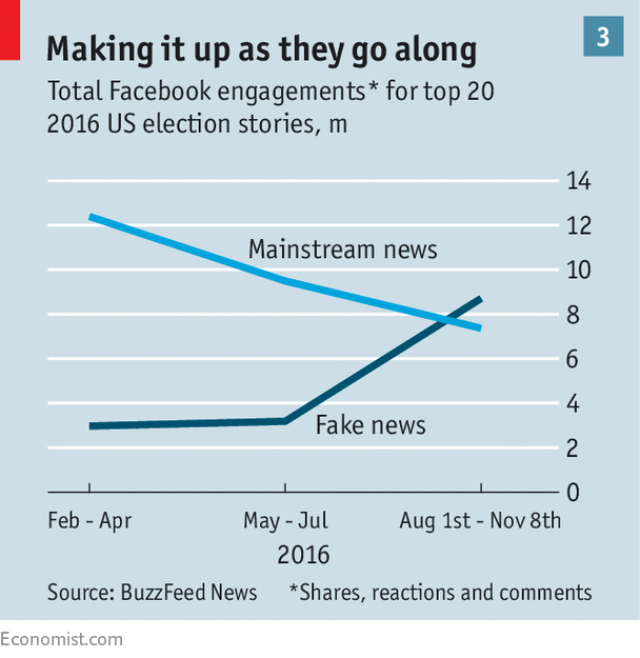

Outrage and humour are thus politically powerful; indeed, they played big roles in mobilisations such as that of Tahrir Square and the Euromaidan. They are also easily integrated into rumour campaigns, such as the one about Hillary Clinton’s health in 2016. In August 2016 far-right bloggers started circulating theories that she was suffering from seizures and was physically weak but that, outrageously, the Democrats and their allies in the mainstream media were covering this up.

Video clips mocking her past coughing fits made the rounds online. Conservative media, such as the Drudge Report and Fox News, picked up the story, mainly by asking there’s-smoke-is-there-fire questions. From there it made it into more liberal mainstream outlets. Ms Clinton saw herself compelled to deny the rumours—only to see them gain strength when she indeed developed pneumonia. As the campaign went on, the amount of play such stories got on social media increased (see chart 3).

It would be easy to dismiss such hacks as mere pranks, as misfits showing off their hacking capabilities to one another and the world, just as they did ten years ago on 4chan. But backed up by an alternative media ecosystem keen to support them, and with judicious help from foreign powers capable of organising themselves a little more thoroughly than ragtag mobocrats, they can become powerful. Although the facts quickly supersede the fictions, once an idea is out there, it tends to linger. Efforts to debunk fake news often don’t spread as far, or through the same networks: indeed, they may well be ignored because they come from mainstream media, which many no longer trust. And even if they are not, they are never as engaging as the rumours they seek to replace.

The biggest attention hacker is none other than Mr Trump himself. When he sends one of his outrageous tweets, often adroitly timed to distract from some other controversy, the world pays pathological levels of attention. The president is today’s attention economy made flesh. He reads as little as possible, gets most of his news from cable television, retweets with minimal thought, and his humour makes it very clear what in-group he is in with. Above all, he loves outrage—both causing it and feeling it.

Being this thoroughly part of the system makes Mr Trump eminently hackable. His staff, it is said, compete to try and get ideas they want him to take on board into media they know he will be exposed to. Outsiders can play the game, too. In 2015 enterprising enemies set up a Twitter bot dedicated to sending him tweets with unattributed quotes from Benito Mussolini, Italy’s fascist dictator. Last year Mr Trump finally retweeted one: “It is better to live one day as a lion than 100 years as a sheep.” Cue Trump-is-a-fascist outrage.

The tension of history

Social media are hardly the first communication revolution to first threaten, then rewire the body politic. The printing press did it (see our essay on Luther). So did television and radio, allowing conformity to be imposed in authoritarian countries at the same time as, in more open ones, promoting the norms of discourse which enabled the first mass democracies.

In those democracies broadcasters were often strictly regulated on the basis that the airwaves they used were a public good of limited capacity. One strong argument for not regulating the internet, heard a lot in the 1990s, was that this scarcity of spectrum no longer applied—the internet’s capacity was limitless. Seeing things through the lens of the attention economy, though, suggests that this distinction may not be as sharp as once it seemed. As Simon said, people’s attention—for which, in internet-speak, bandwidth is often used as a metaphor—is scarce.

But there is a raft of problems with justifying greater regulation on these grounds. One is ignorance, and the risk of perverse outcomes that flows from it. As Rasmus Nielsen of Oxford and Roskilde universities argues, not enough is known about the inner workings of social media to come up with effective regulations.

This is in part the fault of the tech companies, which have been less than generous with information about their business. For a firm which used to say its mission was to make the world more open and connected, Facebook is strikingly closed and isolated. It collaborates with researchers looking at the dynamics of social media, but only allows them to publish results, not underlying data. It has been even less willing than Google to share details about how it decides to recommend content or target ads. Both firms have also lobbied to avoid disclosure rules for political ads that conventional media have to comply with, arguing that digital ads lack the space to make clear who paid for a campaign.

Correctly perceiving that public opinion is turning, the companies now say they will be more forthcoming. Facebook and Twitter have volunteered to show the source of any ads that appear in subscribers’ news feeds and develop tools so people can see all the ads that the social-media companies serve to their customers. Whether that is enough to head off legislation remains to be seen: in America a group of senators has introduced the “Honest Ads Act”, which would extend the rules that apply to print, radio and television to social media. Proponents hope it will become law before the 2018 mid-term election.

Some, such as Mr Ghonim, the former Egyptian activist, say that the “Honest Ads Act” does not go far enough. He wants Facebook and other big social-media platforms to be required to maintain a public feed that provides a detailed overview of the information distributed on their networks, such as how far a piece of content has spread and which sorts of users have seen it. “This would allow us to see what is really happening on these platforms,” says Mr Ghonim. Such demands for transparency would extend to requirements to label bots and fake accounts, so users can understand who is behind a message.

Other proposals go beyond transparency. Increased friction is one suggestion, offering users pop-ups with warnings along the lines of: “Do you really want to share this? This news item has been found to be false.” Social-media firms could also start redirecting people to calmer content after they have been engaging with stories that are negative or hostile. YouTube has experimented with redirecting jihadists away from extremist videos to content that contradicts what they have been watching.

Other proposals aim less at dynamics, more at content. As of this October Germany has required social-media companies to take down hate speech, such as Holocaust denial, and fake news within 24 hours or face fines of up to €50m ($58m). The sheer volume of content—more than 500,000 comments are posted on Facebook every minute—makes such policing hard. It is possible, though, that a mixture of better algorithms and more people could achieve something. Facebook has already agreed to hire a few thousand people for this task; it may need a lot more.

Free-speech advocates cringe at the thought that Facebook should be allowed to become the “ministry of truth”, or, for that matter, that companies might surreptitiously steer users’ activity to quieten them down when they have been angry. As Mr Habermas argues, there is a real value to the openness social media and the internet can bring in restrictive societies. And being angry and unsettled, even outraged, should be a part of that. Some point to China, where it is reported that more than 2m moderators, most employed by social-media firms, scour the networks, erring on the side of caution when they see something that may displease official censors.

A more far-out proposal, which Ms Tufekci favours, is to require social-media firms to change their business model in some way, making their money, perhaps, directly from users, rather than from advertisers. Others argue that the social-media platforms which dominate the attention economy have become utilities and should no longer be run as profit-maximising companies. Mr Wu, the author of “The Attention Merchants”, wants Facebook to become a “public benefit corporation”, obliged by law to aid the public. Wikipedia, an online encyclopedia, could be seen as the model: it lives off donations and its host of volunteers keeps it reasonably clean, honest and reliable. None of this, though, offers a truly satisfactory response to the problem.

Another of Mussolini’s sayings—not yet retweeted by Mr Trump—was that “Democracy is beautiful in theory; in practice it is a fallacy.” As with his preference for the leonine over the sheepish, it is sometimes hard not to sympathise with this, at least a bit. But if, like all political systems, democracy has proved eminently fallible, it has shown itself robustly superior to the rest when it comes to fixing those failings and making good when faced by change. On that basis, history suggests it should be able to weather the storms of social media. But it will not be easy. And, as with books and broadcasts, the process is likely to transform, at least in part, that which it seeks to preserve.

Published on The Economist

published: 18. 12. 2017